DeepSeek’s R1 model family is now running on NVIDIA RTX AI PCs, bringing strong reasoning, problem-solving, advanced mathematics, and code generation to personal computers. Paired with GeForce RTX 50 Series GPUs, the distilled DeepSeek-R1 models deliver fast local inference, making cutting-edge AI accessible without the need for cloud computing.

A New Era of Reasoning Models

Reasoning models represent a significant shift in large language models (LLMs). Conventional LLMs produce an answer in a single pass. Reasoning models instead generate intermediate reasoning steps before they answer, working through complex, multi-step problems much as a person would and reaching more accurate results. Spending extra computation at inference time in this way is known as test-time scaling: the model can “think” longer about harder problems, and its accuracy tends to improve as it does.
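DeepSeek-R1 spends extra inference compute mainly by writing out a longer chain of thought before it answers. A simpler and different form of test-time scaling, self-consistency, makes the same trade-off easy to see: sample several independent answers and keep the most common one. The toy sketch below is not how DeepSeek-R1 works internally; `noisy_solver` is a hypothetical stand-in for one sampled reasoning chain, assumed to be right 60% of the time.

```python
import random
from collections import Counter

def majority_vote(answers):
    """Return the most frequent answer; ties go to the answer seen first."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(answers)
    best = max(counts.values())
    return next(a for a in answers if counts[a] == best)

def noisy_solver(rng, correct="42", p_correct=0.6):
    """Hypothetical stand-in for one sampled reasoning chain.

    Returns the correct answer with probability p_correct, otherwise a random guess.
    """
    if rng.random() < p_correct:
        return correct
    return str(rng.randint(0, 99))

def accuracy(n_samples, trials=1000, correct="42"):
    """Fraction of trials in which majority voting over n_samples chains is right."""
    hits = 0
    for seed in range(trials):
        rng = random.Random(seed)  # fixed seeds keep the experiment reproducible
        answers = [noisy_solver(rng, correct) for _ in range(n_samples)]
        hits += majority_vote(answers) == correct
    return hits / trials

# Larger budgets spend more compute per question.
for budget in (1, 5, 25):
    print(f"{budget:>2} samples per question -> accuracy {accuracy(budget):.2f}")
```

Raising the sample budget pushes accuracy up sharply, at the cost of proportionally more compute per question. That is the trade-off test-time scaling makes, whether the extra compute goes into more samples or, as with DeepSeek-R1, into longer reasoning.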

With these advanced capabilities, users can perform tasks like:

- Market analysis with detailed data interpretation

- Advanced math problem-solving beyond basic calculations

- Code debugging and generation for developers

- Personalized AI agents that learn from user feedback

The DeepSeek Advantage

At the heart of this breakthrough is the DeepSeek-R1 model family, derived from a massive 671-billion-parameter mixture-of-experts (MoE) model. In an MoE model, a router sends each token to a small subset of specialized “expert” subnetworks instead of through the whole network, so DeepSeek-R1 activates only about 37 billion of its 671 billion parameters for each token. DeepSeek has distilled this intelligence into six smaller, more efficient student models, ranging from 1.5 to 70 billion parameters.
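The routing idea can be sketched in a few lines of plain Python. This is a toy illustration of top-k expert routing under stated assumptions: the four experts, the linear router, and k=2 are hypothetical choices, and DeepSeek’s production router is far larger and differs in its details.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, router_weights, experts, k=2):
    """Send input vector x to its top-k experts and mix their outputs.

    router_weights: one weight vector per expert (a hypothetical linear router).
    experts: callables mapping a vector to a vector of the same length.
    Returns the mixed output and the indices of the experts that ran.
    """
    # Router score per expert: dot product of x with that expert's weights.
    logits = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in router_weights]
    # Keep only the k highest-scoring experts; the others are never evaluated.
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    # Renormalize the selected scores into mixing weights that sum to 1.
    gates = softmax([logits[i] for i in top])
    out = [0.0] * len(x)
    for gate, i in zip(gates, top):
        y = experts[i](x)
        out = [o + gate * y_j for o, y_j in zip(out, y)]
    return out, top

# Four toy "experts" that each scale the input by a different factor.
experts = [lambda v, s=s: [s * t for t in v] for s in (1.0, 2.0, 3.0, 4.0)]
router_weights = [[0.1, 0.0], [2.0, 0.0], [1.0, 0.0], [-1.0, 0.0]]
out, used = moe_forward([1.0, 0.5], router_weights, experts, k=2)
```

Only two of the four experts run for this input. At scale, the same idea means most expert parameters sit idle for any given token, so compute per token is far lower than the total parameter count suggests, although all of the parameters still have to be held in memory.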

Through distillation, the reasoning ability of the full DeepSeek-R1 model has been transferred to compact models built on open Llama and Qwen base models, which are small enough to run well on RTX AI PCs. This puts much of the large model’s reasoning capability directly on your desktop or laptop.
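According to DeepSeek’s R1 report, the distilled models were produced by supervised fine-tuning of Qwen and Llama base models on reasoning samples generated by the full model. The sketch below shows a different, classic formulation of knowledge distillation, in which a student is trained to match the teacher’s temperature-softened output distribution. It illustrates the general idea of transferring a teacher’s behavior to a student and is not DeepSeek’s recipe; the temperature value is an assumption.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax of logits divided by temperature (higher T gives softer targets)."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T**2.

    The T**2 factor keeps gradient magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(p_i * math.log(p_i / q_i) for p_i, q_i in zip(p, q) if p_i > 0)
    return temperature ** 2 * kl

teacher = [3.0, 1.0, 0.0]
close_student = [2.5, 1.0, 0.0]
far_student = [0.0, 1.0, 3.0]
print(distillation_loss(teacher, close_student), distillation_loss(teacher, far_student))
```

A training loop would minimize this loss with respect to the student’s parameters, often combined with the ordinary cross-entropy loss on ground-truth labels.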

Peak Performance with GeForce RTX 50 Series

The GeForce RTX 50 Series GPUs are built for the heavy computation that modern AI models require. Based on the NVIDIA Blackwell GPU architecture, they include fifth-generation Tensor Cores that accelerate the matrix math at the core of AI inference. Running DeepSeek-R1 locally on these GPUs offers:

- Blazing-fast inference speeds for real-time results

- Low-latency processing even without an internet connection

- Enhanced privacy by keeping data local, eliminating the need to upload sensitive information to the cloud
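Which distilled model fits on a given RTX GPU depends mostly on its parameter count and numeric precision. The back-of-the-envelope sketch below counts weight memory only. The bit widths are illustrative assumptions, and real 4-bit quantization formats typically store slightly more than 4 bits per weight.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes).

    Ignores the KV cache, activations and runtime overhead, so real usage is higher.
    """
    return num_params * bits_per_weight / 8 / 1e9

# Parameter counts (in billions) of the six distilled DeepSeek-R1 models.
DISTILLED_SIZES_B = [1.5, 7, 8, 14, 32, 70]

for size in DISTILLED_SIZES_B:
    fp16 = weight_memory_gb(size * 1e9, 16)
    q4 = weight_memory_gb(size * 1e9, 4)  # assumes exactly 4 bits per weight
    print(f"{size:>4}B params: ~{fp16:6.1f} GB at FP16, ~{q4:5.1f} GB at 4-bit")
```

By this estimate, a 14B model quantized to 4 bits needs about 7 GB for its weights, while the 70B model needs about 35 GB even at 4 bits. That is more than the 32 GB on a GeForce RTX 5090, so the largest model would need lower-bit quantization or partial offloading to the CPU.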

AI Without Boundaries: Experience DeepSeek on RTX

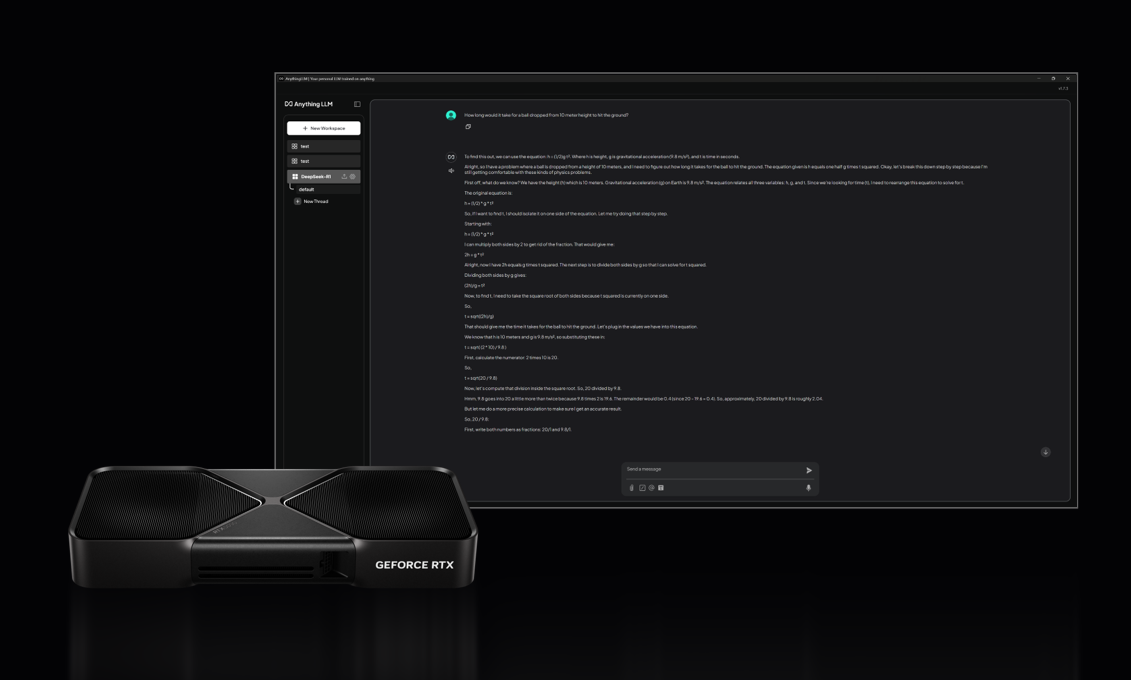

NVIDIA’s RTX AI platform supports DeepSeek-R1 across more than 100 million RTX AI PCs worldwide, making AI accessible to enthusiasts, professionals, and developers alike. Users can run the DeepSeek-R1 models with popular tools such as:

- llama.cpp, Ollama, LM Studio, AnythingLLM, Jan.AI, GPT4All, and Open WebUI for AI inference

- Unsloth for fine-tuning models with custom datasets

These tools can run models entirely offline, so high-performance AI stays available without an internet connection, and prompts and data never have to leave the PC, which improves privacy and security.
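As one example of local inference, Ollama serves models over a REST API on the local machine. The sketch below assumes Ollama is running on its default port and that a distilled model has already been pulled (for example with `ollama pull deepseek-r1:7b`; the tag is an assumption, so substitute whichever model you use). It also assumes the model’s reasoning comes back inline inside `<think>...</think>` tags in the `response` field; depending on your Ollama version and settings, the reasoning may be returned separately, so check its documentation.

```python
import json
import re
import urllib.request

# Ollama's default local endpoint; adjust if your server runs elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"

def build_request(prompt, model="deepseek-r1:7b"):
    """Build a non-streaming generate request for a locally pulled model."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def split_reasoning(text):
    """Split R1-style output into (reasoning inside <think>...</think>, final answer)."""
    match = re.search(r"<think>(.*?)</think>", text, flags=re.DOTALL)
    if match is None:
        return "", text.strip()
    return match.group(1).strip(), text[match.end():].strip()

def ask(prompt, model="deepseek-r1:7b", timeout=300):
    """Send a prompt to the local server and return (reasoning, answer)."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        reply = json.loads(resp.read().decode("utf-8"))
    return split_reasoning(reply["response"])
```

With the server running, `ask("What is 17 * 24?")` returns the model’s reasoning and its final answer as two strings. The request goes only to `localhost`, so the prompt never leaves the machine.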

The Future of AI is Here

With DeepSeek-R1 and the GeForce RTX 50 Series, NVIDIA is not just accelerating AI; it’s redefining what’s possible on personal devices. Whether you’re solving complex problems, creating innovative applications, or exploring new frontiers in AI development, the combination of DeepSeek-R1’s reasoning models and RTX’s processing power is set to change the way we interact with technology.